Why horizontal scaling increases the need for caching

Horizontally scaling a web app has become a total no-brainer in the last few years. PaaS offerings like Heroku allow you to add a “dyno” with the click of a button, and container technology such as Docker has made it just as easy to scale your own deployment. By now, all major cloud providers, such as AWS, let you auto-scale your app.

Given how easy it is to add another container whenever traffic increases and performance starts to take a hit, it is tempting to assume there’s no longer a need for Memcache. In reality, quite the opposite is true: the more we scale an app horizontally, the more we need caching and the more we can benefit from it.

Horizontal scaling and caching improve performance in two very different ways. Horizontal scaling multiplies your app so that each instance has to serve fewer users. Memcache, on the other hand, targets very specific bottlenecks by caching expensive database queries, page renders, or slow computations. As such, the two are best used together.

Let’s explore two types of bottlenecks that horizontal scaling is ill-equipped to handle (or may even exacerbate). I call them architectural bottlenecks and procedural bottlenecks. Hopefully, you will see why horizontal scaling is best combined with a dedicated Memcache server, a Memcache cluster, or a managed service like MemCachier.

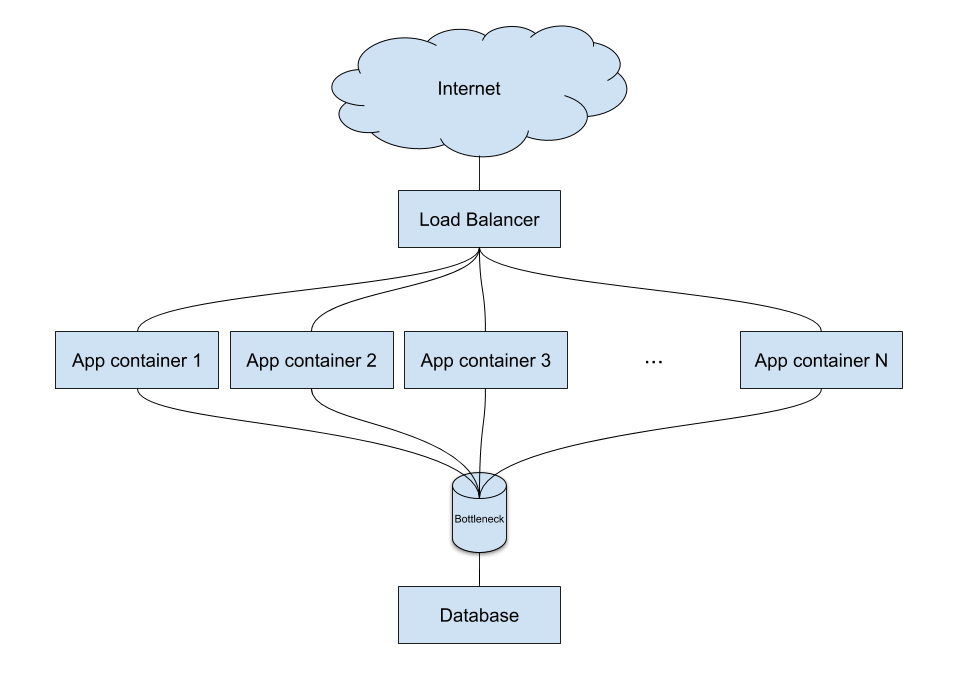

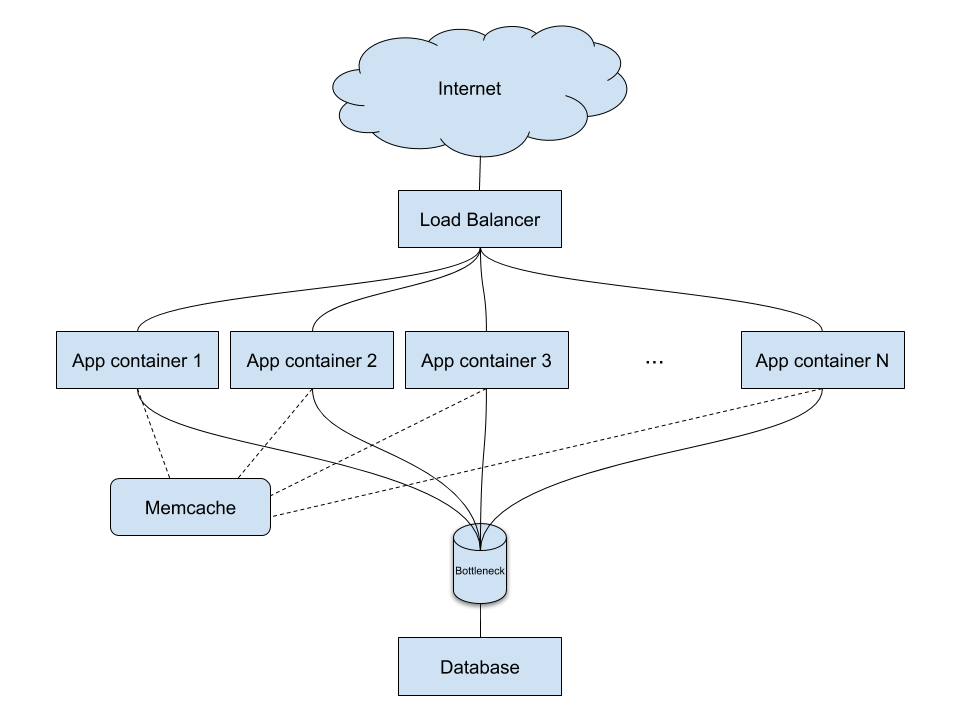

Alleviate an architectural bottleneck

Scaling an app horizontally is easy. However, other components in your architecture, such as your database, usually are not. Scaling a database horizontally is complex and can be very costly. To make matters worse, the more you scale your app by adding containers, the more likely it is that the database becomes a bottleneck, since every new container sends its queries to the same database.

For such an architectural bottleneck, it is much simpler and cheaper to cache the most expensive queries in Memcache.
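To make this concrete, here is a minimal sketch of the common cache-aside pattern in Python: check the cache first and query the database only on a miss. `FakeMemcache`, the `products` table, and `top_products` are hypothetical names. The fake client only loosely mimics the `get`/`set` interface of Memcache clients so the example runs without a server, and an in-memory SQLite database stands in for your real one.

```python
import json
import sqlite3
import time


class FakeMemcache:
    """Hypothetical in-process stand-in for a Memcache client.

    A real client sends get/set commands to a Memcache server over the
    network; this one keeps entries in a dict so the sketch runs anywhere.
    """

    def __init__(self):
        self._store = {}

    def get(self, key):
        entry = self._store.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if expires_at is not None and time.monotonic() >= expires_at:
            del self._store[key]  # an expired entry behaves like a miss
            return None
        return value

    def set(self, key, value, expire=0):
        # expire=0 means "never expire", as in Memcache itself
        expires_at = time.monotonic() + expire if expire else None
        self._store[key] = (value, expires_at)


stats = {"db_queries": 0}  # how often the database was actually queried


def top_products(conn, cache, limit=3):
    """Cache-aside: serve from the cache, query the database only on a miss."""
    key = f"top_products:{limit}"
    cached = cache.get(key)
    if cached is not None:
        return json.loads(cached)
    stats["db_queries"] += 1
    rows = conn.execute(
        "SELECT name, sales FROM products ORDER BY sales DESC LIMIT ?", (limit,)
    ).fetchall()
    result = [list(row) for row in rows]
    cache.set(key, json.dumps(result), expire=60)  # cache for 60 seconds
    return result


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE products (name TEXT, sales INTEGER)")
conn.executemany(
    "INSERT INTO products VALUES (?, ?)",
    [("a", 5), ("b", 9), ("c", 1), ("d", 7)],
)
cache = FakeMemcache()
for _ in range(100):
    top_products(conn, cache)
print(stats["db_queries"])  # 1: only the first call reached the database
```

With a 60-second TTL, the database sees roughly one such query per minute for this key, however many app instances call `top_products`, as long as they all share the same cache.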

Why is Memcache not an architectural bottleneck?

One might think that adding a Memcache server just moves the problem to another service: the Memcache server appears to be an architectural bottleneck, just like the database.

There are a few reasons this is not the case. First of all, Memcache is fast: it keeps data in memory and answers simple key lookups. As such, a Memcache server can take orders of magnitude more traffic than a similarly sized database server.

Furthermore, Memcache can easily be clustered (scaled horizontally) by combining multiple Memcache servers. If you use a service like MemCachier, you won’t even have to worry about this, because every cache can be scaled seamlessly and automatically receives more proxies as it grows.
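In a self-managed cluster, the client library typically decides which server owns each key. A widely used technique for this is consistent hashing; the sketch below is a simplified version of it, not MemCachier's implementation, and the server addresses and `HashRing` class are made up for illustration. Its useful property is that adding a server only moves the keys the new server takes over, instead of reshuffling the whole cache.

```python
import bisect
import hashlib


class HashRing:
    """Simplified consistent-hash ring mapping cache keys to Memcache servers."""

    def __init__(self, servers, points_per_server=100):
        ring = []
        for server in servers:
            # many virtual points per server spread the keys more evenly
            for i in range(points_per_server):
                ring.append((self._hash(f"{server}#{i}"), server))
        ring.sort()
        self._hashes = [h for h, _ in ring]
        self._servers = [s for _, s in ring]

    @staticmethod
    def _hash(value):
        return int(hashlib.md5(value.encode()).hexdigest(), 16)

    def server_for(self, key):
        # take the first ring point after the key's hash,
        # wrapping around to the start of the ring
        idx = bisect.bisect(self._hashes, self._hash(key)) % len(self._hashes)
        return self._servers[idx]


servers = ["cache1:11211", "cache2:11211", "cache3:11211"]
keys = [f"user:{i}" for i in range(1000)]

ring = HashRing(servers)
before = {k: ring.server_for(k) for k in keys}

grown = HashRing(servers + ["cache4:11211"])
moved = [k for k in keys if grown.server_for(k) != before[k]]
# expect roughly a quarter of the keys to move, all of them to cache4
print(len(moved))
```

By contrast, a naive `hash(key) % number_of_servers` scheme would remap most keys whenever a server is added, which would briefly turn a growing cluster into a mostly empty cache.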

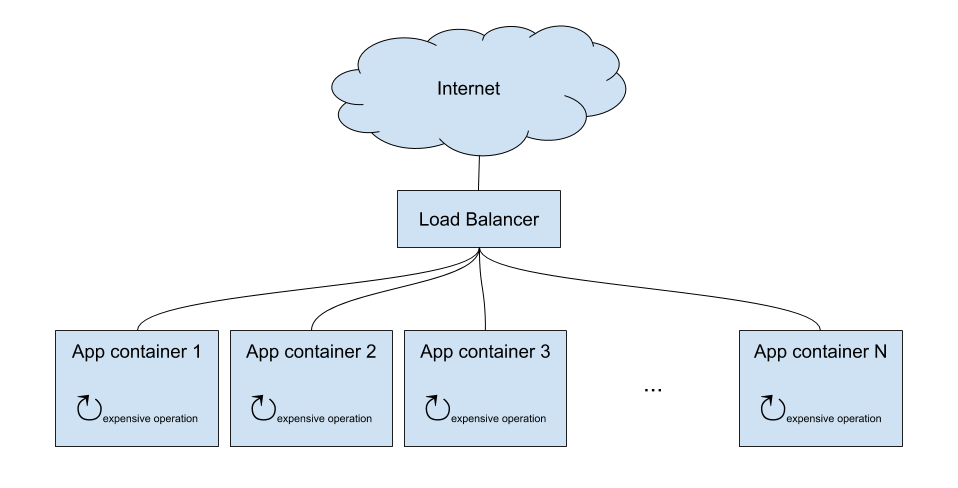

Alleviate a procedural bottleneck

Another type of bottleneck, which I call a procedural bottleneck, can be present within the app itself. This kind of bottleneck is much subtler because horizontal scaling does in fact alleviate it. However, a procedural bottleneck forces you to add far more containers to handle the same amount of traffic.

For example, an expensive computation is a procedural bottleneck. No matter how many instances of your app you have, each one will need to execute this expensive computation.

In this case, the bottleneck is the CPU. Your requests are slow, even under low traffic and with an under-utilized network connection, because they have to wait for the CPU to perform the expensive computation.

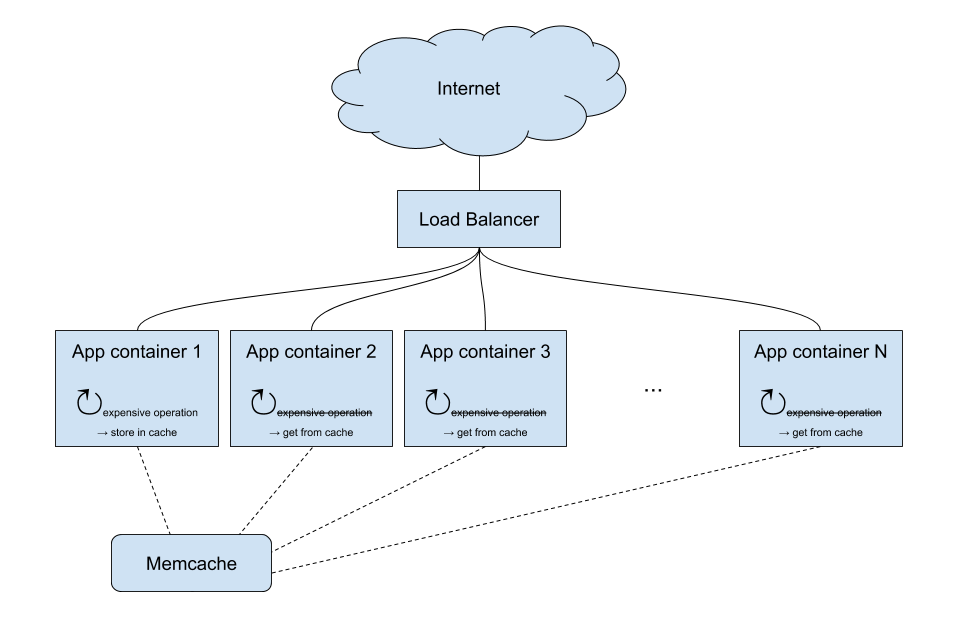

Using Memcache to address a procedural bottleneck can greatly reduce the number of app instances you would otherwise need. It allows you to cache the result of the expensive operation, which takes the CPU bottleneck out of every request that hits the cache. As a result, your app can serve more requests with fewer instances.
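The same idea applies to computations instead of queries. Below is a minimal sketch of a memoizing decorator; `DictCache`, `memcached`, and `count_primes` are hypothetical names, the dict-backed cache stands in for a real Memcache client, and the prime counter is just a deliberately slow, CPU-bound placeholder for your expensive operation.

```python
import functools
import hashlib
import json


class DictCache:
    """Hypothetical stand-in for a Memcache client (get/set only, no expiry)."""

    def __init__(self):
        self._data = {}

    def get(self, key):
        return self._data.get(key)

    def set(self, key, value, expire=0):
        self._data[key] = value  # expire is accepted but ignored here


def memcached(cache, expire=300):
    """Cache a function's JSON-serializable result, keyed by its arguments."""

    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args):
            # Memcache keys are limited to 250 bytes with no whitespace,
            # so hash the arguments instead of embedding them in the key.
            digest = hashlib.sha1(json.dumps(args).encode()).hexdigest()
            key = f"{fn.__name__}:{digest}"
            hit = cache.get(key)
            if hit is not None:
                return json.loads(hit)
            result = fn(*args)  # cache miss: do the expensive work once
            cache.set(key, json.dumps(result), expire=expire)
            return result

        return wrapper

    return decorator


calls = {"count_primes": 0}
cache = DictCache()


@memcached(cache)
def count_primes(limit):
    """Deliberately slow, CPU-bound trial division."""
    calls["count_primes"] += 1
    return sum(
        all(n % d for d in range(2, int(n ** 0.5) + 1)) for n in range(2, limit)
    )


first = count_primes(20_000)
second = count_primes(20_000)  # served from the cache, no recomputation
print(first == second, calls["count_primes"])  # True 1
```

The key includes the function name so that two cached functions called with the same arguments do not collide. In practice you would also choose a TTL that matches how stale the result is allowed to get.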

Horizontal scaling and caching - a match made in heaven

Horizontal scaling and Memcache are a great fit, especially for overcoming procedural bottlenecks, and even more so when you use a dedicated Memcache server or an external service such as MemCachier. With such a setup, an expensive operation needs to be performed by just one instance of the app, which then caches the result. All other instances immediately benefit from the cached result and are spared from doing the work themselves.
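The difference between a per-instance cache and a shared one shows up clearly in a small simulation. In this hypothetical sketch, `AppInstance`, `simulate`, and the `daily_report` key are made up, and a dict-backed `DictCache` again stands in for Memcache. When every instance has its own cache, each one repeats the expensive work; when they all point at one shared cache, only the first instance does.

```python
class DictCache:
    """Hypothetical stand-in for a Memcache client (get/set only)."""

    def __init__(self):
        self._data = {}

    def get(self, key):
        return self._data.get(key)

    def set(self, key, value):
        self._data[key] = value


class AppInstance:
    """Hypothetical app instance that caches an expensive report."""

    def __init__(self, name, cache, counter):
        self.name = name
        self.cache = cache
        self.counter = counter

    def handle_request(self):
        report = self.cache.get("daily_report")
        if report is None:
            self.counter["computations"] += 1  # the expensive work happens here
            report = f"report built by {self.name}"
            self.cache.set("daily_report", report)
        return report


def simulate(num_instances, shared):
    """Count expensive computations across instances for 10 request rounds."""
    counter = {"computations": 0}
    shared_cache = DictCache()
    instances = [
        AppInstance(f"web.{i}", shared_cache if shared else DictCache(), counter)
        for i in range(1, num_instances + 1)
    ]
    for _ in range(10):  # every instance serves ten requests
        for inst in instances:
            inst.handle_request()
    return counter["computations"]


print(simulate(8, shared=False))  # 8: each instance computes the report itself
print(simulate(8, shared=True))   # 1: the first instance computes, the rest hit
```

This simulation is sequential. In a real deployment, several instances can miss at the same moment and compute the value concurrently, so the expensive work may occasionally happen a few times rather than exactly once.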

With this architecture, the more you scale horizontally, the more you benefit from Memcache.

If you have any questions about using Memcache for a horizontally scaled app (or anything else cache-related!) please leave a comment and one of MemCachier’s engineers will get back to you.

Sascha